KILL is one of the commands that we on the database side use on a regular basis. It is understandable that some SQL Server users come away saying “KILL with WITH STATUSONLY is not killing the session”.

As the name suggests, WITH STATUSONLY only shows the status of the session ID passed along with the KILL command; it does not terminate that session. Additionally, the status is shown only when that session is already in the rollback state.

For example, just imagine that you killed an ALTER

Let’s look at a simple example of running KILL WITH STATUSONLY against a session.

I created a table named “Kill_test” and bulk-imported records into it. With the records in place, I select the data as:

SELECT * FROM Kill_test

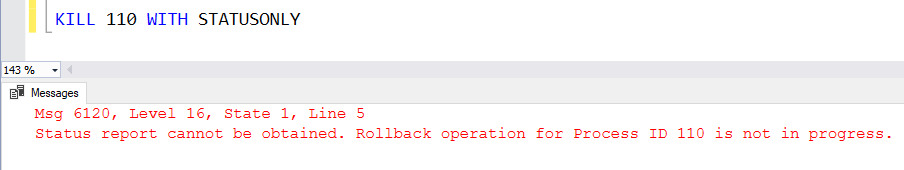
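If you want to reproduce the setup, a table like this could be built roughly as follows. This is only a sketch: the column names, the row count, and the row-generation trick are assumptions for illustration, not the schema or data used in the screenshots.

```sql
-- Hypothetical setup: columns and row count are assumptions, not the original schema.
-- The table is deliberately left as a heap (no clustered index, no primary key).
CREATE TABLE dbo.Kill_test
(
    id      INT          NOT NULL,
    payload VARCHAR(200) NOT NULL
);

-- Generate a large number of rows by cross joining a system catalog view with itself.
INSERT INTO dbo.Kill_test (id, payload)
SELECT TOP (5000000)
       ROW_NUMBER() OVER (ORDER BY (SELECT NULL)),  -- sequential id
       REPLICATE('x', 200)                          -- filler so the rows take up space
FROM sys.all_objects AS a
CROSS JOIN sys.all_objects AS b
CROSS JOIN sys.all_objects AS c;
```

A wide filler column makes the later SELECT run long enough to try the KILL commands against it.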

While the SELECT is still running, I run the KILL WITH STATUSONLY command from another session, as below. In my case, the session ID of the SELECT is 110.

KILL 110 WITH STATUSONLY

In this case, we may notice an error rather than a status report.

This happens because the session we passed to KILL (spid) WITH STATUSONLY was running a SELECT command and was not being rolled back.

WITH STATUSONLY gathers and shows the status of a session only when that session is in the rollback state.
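One way to see whether a session is actually rolling back before asking for its status is to look at the request in `sys.dm_exec_requests`. The query below is a minimal sketch using session ID 110 from this example. For a session that is not rolling back, SQL Server's documentation describes error 6120 ("Status report cannot be obtained. Rollback operation for Process ID 110 is not in progress."), which matches the behavior seen here.

```sql
-- 110 is the session ID used in this example; replace it with your own.
SELECT r.session_id,
       r.status,
       r.command,                    -- typically KILLED/ROLLBACK while a killed session rolls back
       r.percent_complete,           -- populated for rollbacks, among a few other commands
       r.estimated_completion_time   -- milliseconds
FROM sys.dm_exec_requests AS r
WHERE r.session_id = 110;
```

If the command column shows SELECT, as it would for the query above, there is no rollback in progress and WITH STATUSONLY has nothing to report.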

Fix and bottom line:

If you feel that your session is not being killed by the WITH STATUSONLY command, that is a misconception about what the option does: it only reports rollback progress and never kills the session ID. Please use KILL (spid) alone to finish the job.

KILL (spid)

Example:

You may also refer to: "How to kill a SPID in SQL Server"

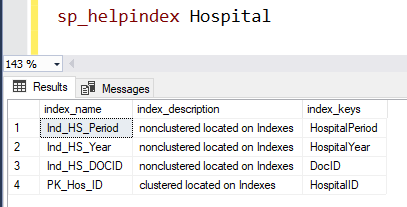

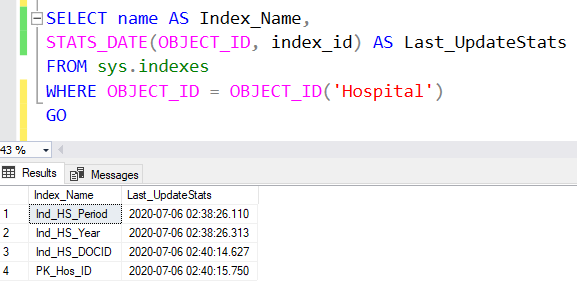

For your testing purposes

Try creating a clustered index on a heap (a table that has no clustered index) holding a huge number of records. Please make sure to run this only in a development environment. After about a minute of execution time, kill the session. The killed session will be in the KILLED/ROLLBACK state. Now run KILL (spid) WITH STATUSONLY to check the current status of the killed session.
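The test described above might look like the sketch below. It assumes a large heap named dbo.Kill_test with an `id` column; the column, the index name, and session ID 110 are placeholders for illustration, so substitute your own values.

```sql
-- Session 1 (development environment only): note the session ID, then start the index build.
SELECT @@SPID AS session_id;

-- Hypothetical index name and key column; the build should run for a while on a large heap.
CREATE CLUSTERED INDEX CIX_Kill_test_id
    ON dbo.Kill_test (id);

-- Session 2: after about a minute, kill session 1 (replace 110 with the ID noted above).
KILL 110;

-- Session 2: the killed session is now rolling back, so a progress report is available.
KILL 110 WITH STATUSONLY;
```

While the rollback is running, the second KILL should return an estimated completion percentage and time remaining instead of an error. Once the rollback has finished, the same command goes back to reporting that no rollback is in progress.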